Artificial intelligence has moved from experimentation to everyday business use. With that shift comes a new expectation: AI systems must not only perform well, but also operate in a way that is transparent, controlled, and reliable.

The EU AI Act (Regulation (EU) 2024/1689) marks a major step in that direction. The regulation sets a framework for how AI should be developed and used responsibly, without limiting the development of new solutions. For companies and their customers, this is about building confidence in how AI supports decision-making.

Understanding the EU AI Act

The EU AI Act is the first comprehensive regulatory framework for artificial intelligence in the European Union. Under the Act, AI systems are classified based on the level of risk they pose, and obligations are applied accordingly.

The risk-based model ranges from unacceptable risk (less than 1% of AI solutions) to minimal risk (around 70%). Compliance obligations depend on which of four categories a system falls into:

- Unacceptable risk

- High risk

- Limited risk

- Minimal risk

The goal is not to slow down adoption, but to ensure that AI systems are safe, traceable, and accountable throughout their lifecycle.

Why does the EU AI Act matter now?

Organizations are preparing for stricter requirements around documentation, monitoring, and governance. AI is no longer a standalone capability but a part of core operations. As a result, expectations are changing:

- Decisions supported by AI must be explainable

- Systems must be monitored beyond initial deployment

- Responsibilities must be clearly defined across providers and users

In short, AI needs to stand up to scrutiny in real-world conditions.

The EU AI Act: what it means for Spacewell

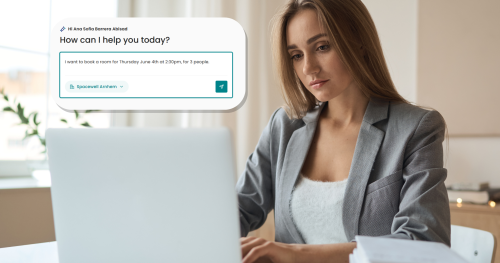

Spacewell delivers AI-driven insights across buildings, energy, and workplace environments, areas where decisions have direct operational and financial impact. That context makes reliability essential.

The regulation introduces a more structured approach to how AI systems are managed at Spacewell:

- Documentation and Regular Monitoring: We continuously supervise how our algorithms function and validate their performance on a regular basis.

- Human Oversight: Human oversight mechanisms are in place to record incidents and ensure systems function as intended.

- Cybersecurity: We are guided by rigorous technical standards, including ISO 27001, to protect our systems and customer data.

For customers, this means greater confidence in the tools they use every day. AI is integrated into many aspects of our solutions, wherever it provides better results than manual analysis.

Compliance with the EU AI Act

The direction set by the EU AI Act aligns with a broader shift in the market. Organizations are moving beyond asking what AI can do, and focusing instead on how reliably it can support their business.

For Spacewell, this is not a change in direction, but a continuation of how solutions are designed and delivered.

By combining advanced analytics with strong governance and clear accountability, we ensure that customers can use AI with confidence, knowing it supports decisions in a consistent and responsible way.

The EU AI Act signals a new phase for artificial intelligence in Europe. It sets clear expectations both for the results AI systems deliver and for how those results are achieved.

To learn more about the compliance and security of our software, visit our Platform & Security page.